Docker image optimization: Tips and Tricks for Faster Builds and Smaller Sizes

I have been making a couple of changes in the build and deployment process of The Urbanlibrary. Notably, I opted to switch to docker altogether because going to the /etc directory to change some configurations was getting tedious.

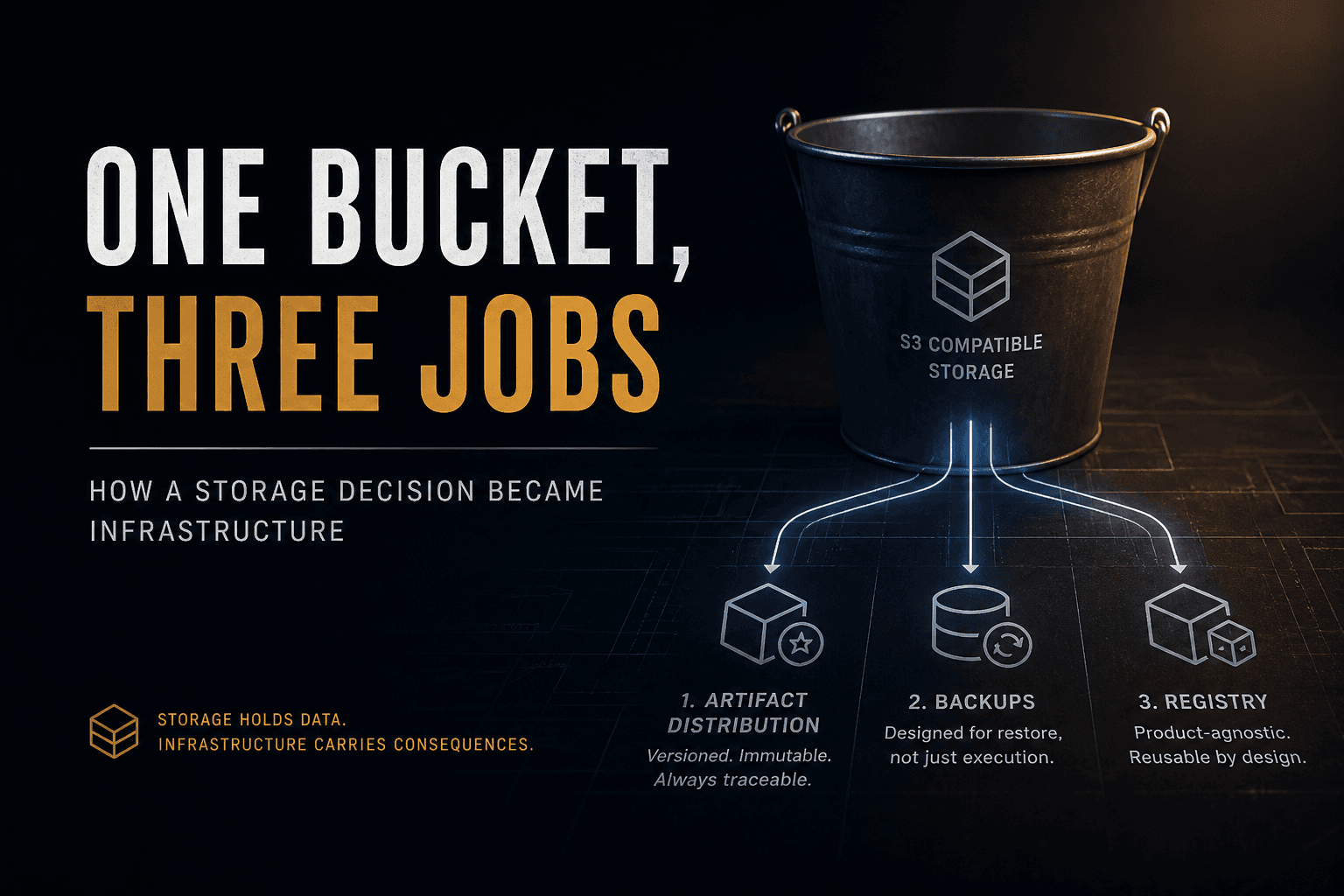

I needed a central place for all this. I needed a localized address where I could get all my code and the requirements to, well, ‘just work’.

Scenario:

I deploy projects A and B to the same server. We might have two domain names, or each might use a different subdomain. To be sure, or if I wanted to change them, I would have to modify the nginx file(s) on the server.

Would it not be easier to look at these locally, so that I could deploy with confidence each time?

Come Ye Docker

Consequently, I packaged my projects into their respective images and deployed them. All the while, I was keen on performance and memory consumption.

Questions:

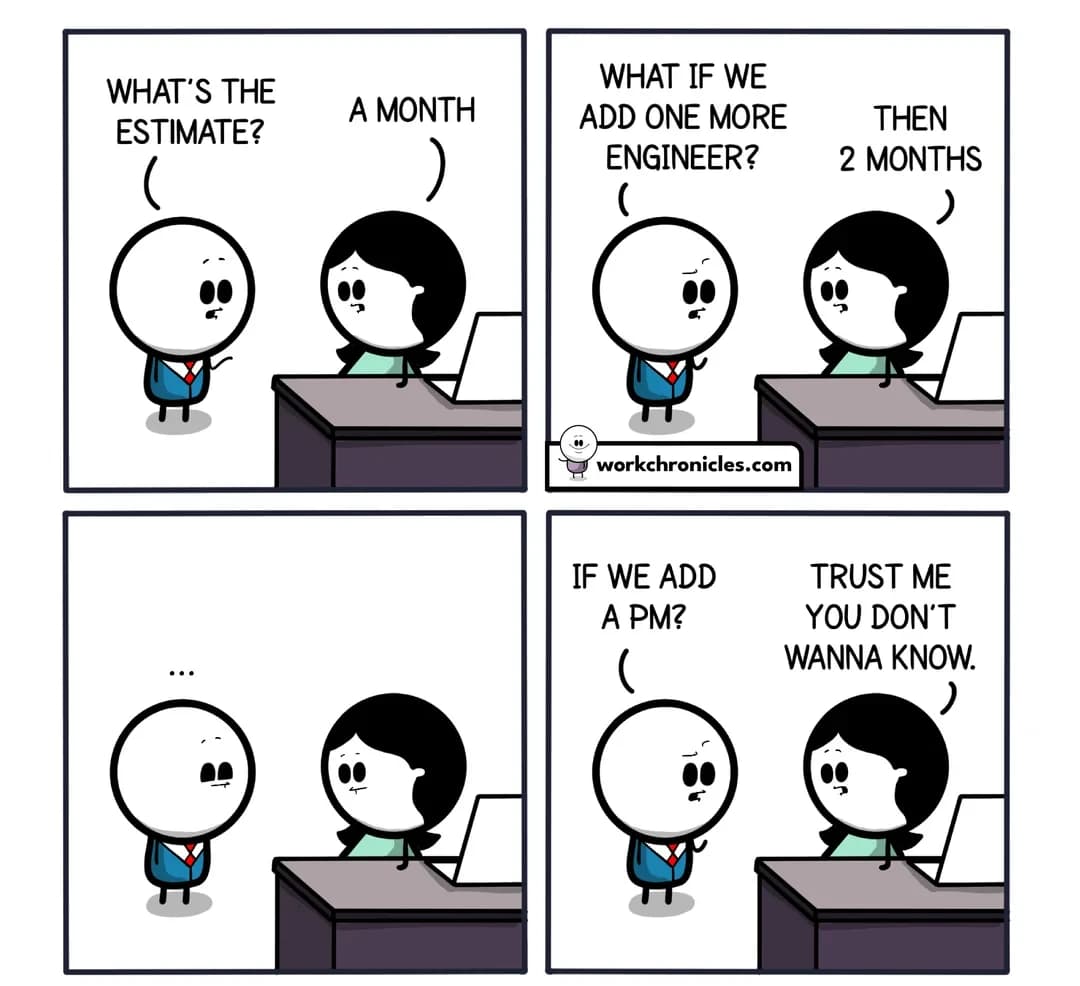

How long does it take to build?

How big are the respective images?

What does CPU usage look like?

Are they truly that much safer than bare metal?

In this journey of discovery, I built upon the following recommended practices of containerization - modifying each to suit what it needed to accomplish.

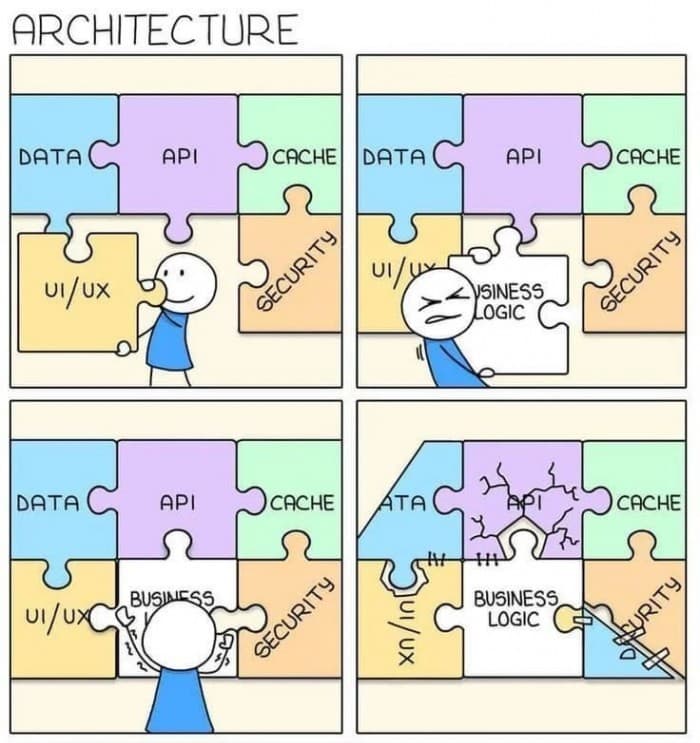

Minimizing the number of layers

At its core, Docker sits on the premise of layers. Case in point: a software engineering project requires an operating system, the core components that let your programming library/language work, the programming language itself and the files that you write.

Similarly, when you go the docker way, you create a system where you are in control of these layers. Do you need library X or would Y work better? What about cron tasks? Does my system require cron or curl to be installed somewhere within?

Across the commands that we have in docker files, the following modify the image size:

COPY

RUN

ADD

What is common amongst the above is that they involve the movement of files, either from the internet (RUN or ADD) or from your local directory (COPY).

Example:

Take the following docker file.

# many layers

FROM python:3

# requirements.txt has to exist inside the image before pip can read it

COPY requirements.txt .

RUN python -m pip install --upgrade pip

RUN python -m pip install --upgrade setuptools

RUN pip install -r requirements.txt

To optimize this, we can merge the multiple RUN commands into a single instruction.

Image 2:

FROM python:3

# requirements.txt has to exist inside the image before pip can read it

COPY requirements.txt .

# notice the merge of RUN commands to update the tooling and install any packages needed

RUN python -m pip install --upgrade pip \

&& python -m pip install --upgrade setuptools \

&& pip install -r requirements.txt

You will notice that each of the instructions listed earlier (COPY, RUN, ADD) produces a layer that can change the image size. The same does not apply to other Dockerfile instructions such as CMD and ENTRYPOINT, as they only record metadata and are limited to creating intermediate layers (0 bytes).

Choosing the right image

When it comes to choosing a base image for your containers, it is advised to use an image that matches your environment as closely as possible.

Example:

If deploying a Java-based application, you would use the JDK image upfront rather than using an Ubuntu-based image and installing the JDK on top of it. In this case, the choice of base image in your Dockerfile would look as below:

FROM ubuntu:latest vs FROM openjdk:latest

This way, you spare the image from installing some apt dependency W just because a recommended background task happens to need it.

Using the exact image tag rather than choosing the latest tag for the image base

Correct. The previous Dockerfile example is wildly flawed: both base images rely on the latest tag.

Example:

Consider, for a moment, that we are running an older Django 1.x project. However, our Dockerfile insists on building from the latest version of Python out there, so we add the following image.

FROM python:latest

What are the project dependencies that were deprecated? What module was moved or merged? What package had to change because the libraries of the latest python version no longer behave the same?

Instead, you could run your product with a specified version:

FROM rust:1.64.0

(The above snippet assumes you are a rustacean, but the same principle applies to any code base you might have).

Using a minimal-sized base image

Getting back to layers, an image can be built on top of another. Your stack of choice, say Python 3, might come as the default image or as a variant based on a specific Debian release or on Alpine. These image versions can appear as below:

python:3.9.16

python:3.9.16-slim

python:3.9.16-alpine

python:3.9.6-slim-bullseye

To identify the base of your images, you look at the tags that follow the version. In the above case, images tagged with the keyword alpine are based on Alpine Linux, while those with the tags jessie, stretch, buster and bullseye rely on Debian 8, 9, 10 and 11 respectively. The slim tag marks a trimmed version of the default image (here python:3.9.16); it can appear on its own, as in python:3.9.16-slim, or combined with a Debian release name, as in slim-bullseye.

Note: The names jessie, stretch, buster and bullseye are not random but rather the actual names of the Debian Linux versions released at that time. Thus, if we had a new Debian Linux version named strange-happenings, we would consequently get a Python image tagged python:3.9.16-strange-happenings.

Of the mentioned, the safest bet is on using the default image. In this case, python:3.9.16. We use alpine when we want to have the bare minimum setup and are willing to install only the required packages manually.

Remember, however, that Alpine-based images can run into libc compatibility challenges, because Alpine ships musl rather than glibc. For reference, both are implementations of the C standard library, and the compiled extensions in data science packages like pandas, scipy and so forth are commonly built against glibc.
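As a minimal sketch of what "installing only the required packages manually" can look like on Alpine, the Dockerfile below adds a compiler toolchain before installing Python dependencies. The package names (gcc, musl-dev) are an assumption about what a requirements.txt with compiled extensions might need; your project may need more, fewer, or none at all.

```dockerfile
FROM python:3.9.16-alpine

# Assumption: some dependency has to compile C code against musl,
# so a compiler and the musl headers are installed first.
RUN apk add --no-cache gcc musl-dev

COPY requirements.txt .

RUN pip install --no-cache-dir -r requirements.txt
```

If a build like this grows a long list of apk packages, that is usually a hint that the slim or default image is the more comfortable fit.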

Let’s take a look at how these images might compare by pulling and doing an image size comparison.

From your terminal, pull the images, replacing the tags where needed.

docker pull python:3.9.16

docker pull python:3.9.16-slim

docker pull python:3.9.16-alpine

# add the --quiet flag to keep the logs off your screen or at a minimum

docker pull --quiet python:3.9.6-slim-bullseye

To compare their sizes:

docker images

A great thread that you might be interested in is this: Super small images based on alpine linux. To quote a comment that stood out for me:

As another example, a co-worker recently was working with some (out-of-tree) gstreamer plugins, and the most convenient way to do so was with a docker image in which all the major gstreamer dependencies, the latest version of gstreamer, and the out-of-tree plugins were built from source. The offered image was over 10GB and 30 layers, took quite a while to download, and a surprising number of seconds to run. With just a few tweaks it was reduced to 1.1GB and a handful of layers which runs in less than a second. It was just a total lack of care for efficiency that made it 10x less efficient in every way, enough to actually reduce developer productivity … ploxiln on Dec 23, 2015

Remember: choose the right image based on your needs.

Use multi-stage docker files

The concept of multi-stage Dockerfiles entails breaking your project's build process into more than one stage. In this case, if building a project with, say, Go, the build files and the dependencies needed for them would live in one image while the compiled binary would sit in another, ready to be run.
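A minimal sketch of the Go case could look like the following. The image tags, the /src and /out paths, the binary name app, and the presence of go.mod and go.sum at the project root are all assumptions made for illustration.

```dockerfile
# Stage 1: the toolchain and the source code live here
FROM golang:1.19 AS build
WORKDIR /src

# Assumption: the project is a Go module with go.mod and go.sum at its root
COPY go.mod go.sum ./
RUN go mod download

COPY . .

# CGO is disabled so the binary does not depend on the build image's C library
RUN CGO_ENABLED=0 go build -o /out/app .

# Stage 2: only the compiled binary is carried over
FROM alpine:3.17
COPY --from=build /out/app /usr/local/bin/app
ENTRYPOINT ["/usr/local/bin/app"]
```

The final image contains the Alpine base plus one binary; the Go compiler and the downloaded modules stay behind in the build stage.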

Likewise, if building a python project, the installation of the dependencies would be on one image while the actual production-ready application would be on another. The result would look something like this.
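One possible shape for the Python case is sketched below, assuming a requirements.txt at the project root and an entry point named main.py (both hypothetical). Dependencies are installed into a virtual environment in the first stage, and only that environment is copied into the slimmer final image.

```dockerfile
# Stage 1: install the dependencies into an isolated virtual environment
FROM python:3.9.16 AS builder
WORKDIR /app
COPY requirements.txt .
RUN python -m venv /opt/venv \
    && /opt/venv/bin/pip install --no-cache-dir -r requirements.txt

# Stage 2: the production image only receives the finished environment
FROM python:3.9.16-slim
WORKDIR /app
COPY --from=builder /opt/venv /opt/venv

# Put the virtual environment first on PATH so its python and pip are used
ENV PATH="/opt/venv/bin:$PATH"

COPY . .

# main.py is a placeholder for your application's entry point
CMD ["python", "main.py"]
```

Both stages use the same Python version on purpose, since the virtual environment points back at the interpreter it was created with.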

You can read more about this in the piece: Multi-stage docker files vs builder patterns

Thought Digest: Did you know that once a Docker image has been built, Docker reuses the layers that haven't changed, so it doesn't have to rebuild everything from scratch again? That’s called caching.
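To make the most of that cache, it helps to order instructions from least to most frequently changed. The sketch below assumes a Python project with a requirements.txt and a hypothetical main.py entry point; the idea is that editing application code should not trigger a fresh dependency install.

```dockerfile
FROM python:3.9.16-slim
WORKDIR /app

# Copy only the dependency list first: this layer and the install below
# are reused from cache as long as requirements.txt is unchanged.
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

# Application code changes often, so it is copied last.
COPY . .

# main.py is a placeholder for your application's entry point
CMD ["python", "main.py"]
```

Had COPY . . come before the pip install, any change to any file would invalidate the cache for the install step as well.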

Only install the required dependencies

Building on the idea of choosing the image that most closely resembles the stack you are using, having only the required dependencies is key. For instance, using an Alpine-based image and installing git, Node.js and the Java JDK for a program that needs none of them is baggage brought forward.
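On Debian-based images, one way to keep extras out is to tell apt to skip recommended packages and to clear its package lists in the same layer. The sketch below installs curl purely as an example of a package your project might genuinely need.

```dockerfile
FROM python:3.9.16-slim

# --no-install-recommends skips packages that are suggested but not required.
# The package lists are removed in the same RUN so they never land in a layer.
RUN apt-get update \
    && apt-get install -y --no-install-recommends curl \
    && rm -rf /var/lib/apt/lists/*
```

Running the cleanup in a separate RUN would not help, because the earlier layer would still carry the files.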

Have a .dockerignore file

Your .gitignore file knows to keep node_modules out of version control. Your Docker image, however, does not. In the .dockerignore file, we list any items that the image will generate during installation later on, as well as items that are not needed for the image to function, so that they are left out of the build.

Running your docker container commands as a non-root user

Every time we create a Dockerfile and build its image, the base image places you in the position of the root user by default. That is, it assumes you know what you are doing. This leaves you vulnerable to attackers (users or processes) that can gain access to your host system. They can consequently access the project files, copy or add more scripts and so forth.

In the same way, you are not expected to use a VPS as root, nor do I expect to see the almighty # on your terminal (an indication that you are navigating as root).

To avert this, you create a non-root user in your Docker image and switch to it with the USER directive in your Dockerfile.

For example, to create a non-root user called appuser101:

FROM python:3.9.16

# Create user

RUN useradd -m appuser101

# Set user as default

USER appuser101

To run commands in the container as this new user, you can use the docker exec command as below:

docker exec -u appuser101 -it <container_name> <command>
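A slightly fuller sketch, assuming the same appuser101 user plus a hypothetical main.py entry point and working directory, also hands ownership of the application files to the non-root user so that nothing in the project tree belongs to root:

```dockerfile
FROM python:3.9.16-slim

# Create the non-root user
RUN useradd -m appuser101

# Assumption: the application lives under the new user's home directory
WORKDIR /home/appuser101/app

# Copy the project and make appuser101 the owner of the copied files
COPY --chown=appuser101:appuser101 . .

# Later instructions and the running container use appuser101
USER appuser101

# main.py is a placeholder for your application's entry point
CMD ["python", "main.py"]
```

Placing USER after the steps that need root (such as useradd) keeps the build working while the container itself runs unprivileged.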

Where we stand

So far, I have significantly reduced the sizes of my images and their build times as well as improved their maintainability. My billing has also changed. I get the room to have one or more projects for the price of one.

Is there more? I think so. I am pushing this to see how it goes but I will come back with more along this trail.

A word to the wise:

Caching is your friend. Caching is here to stay.